
The debut of ChatGPT on 30 November 2022 marked a pivotal moment in the evolution of artificial intelligence (AI).

Developed by OpenAI, ChatGPT quickly captured the world’s attention with its ability to generate human-quality text, translate languages, write different kinds of creative content, and answer questions in an informative way.

Despite its rapid adoption, with an estimated 100 million weekly users, the widespread use of ChatGPT has shed light on critical concerns. These include potential risks to national security, among them disinformation, fraud, intellectual property conflicts, and discriminatory implications.

Security Landscape: The prevalent use of AI models like ChatGPT has brought cybersecurity concerns to the forefront, emphasising the critical need for robust measures to thwart potential threats to national security.

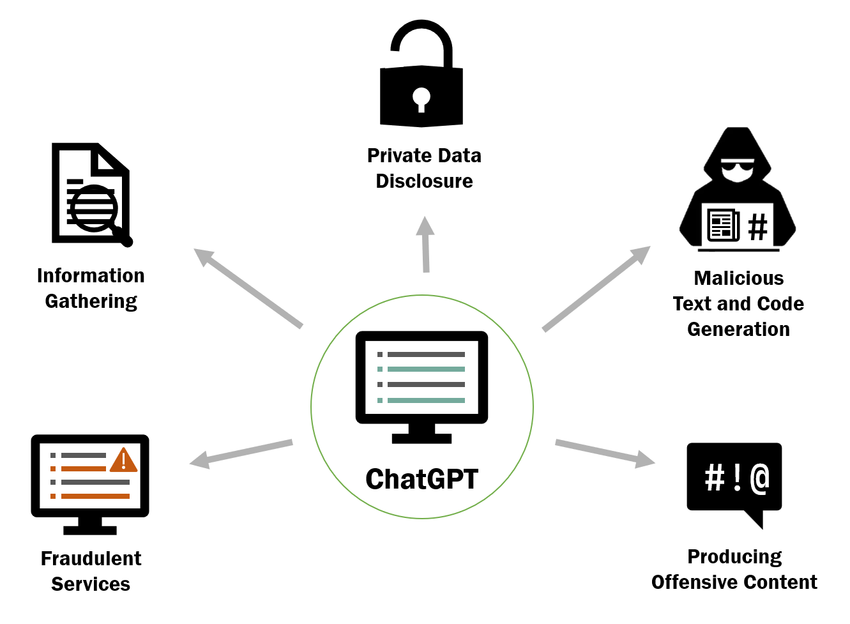

Security Breaches and Emerging Threats: Security incidents have exposed vulnerabilities in ChatGPT, leading to breaches that compromised users’ chat history.

- The sophistication and popularity of these language models make them prime targets for potential cyber threats.

- Scammers exploit ChatGPT’s features to mimic celebrities’ writing styles, deceiving individuals into sharing personal information for criminal purposes like impersonation and phishing scams.

- Cybersecurity experts have noted a rise in sophisticated AI-generated messages, making it difficult for individuals to distinguish genuine content from fraudulent content.

The continuous evolution of chatbots introduces fresh cyber threats, stemming from their increasingly sophisticated language capabilities and widespread popularity. Consequently, this technology becomes a prominent target for exploitation as an attack vector.

Risks to Data Security: Amidst these emerging threats, users must also be aware of the risks associated with information provided to ChatGPT.

- When users upload data to ChatGPT, they grant the model permission to use that data for training purposes, especially if they are using a free version.

- It’s extremely easy to feed ChatGPT your private information by mistake, leading to the exposure of sensitive data if users are not careful about what they upload.

Business Response: While recognising the potential benefits of AI, certain companies have taken proactive measures by either pausing or restricting the use of AI tools such as ChatGPT over data security risks.

- US Space Force pauses use of AI tools like ChatGPT

- Apple restricts use of OpenAI’s ChatGPT for employees

- Samsung bans staff’s AI use after spotting ChatGPT data leak

Disinformation Challenge: ChatGPT generates seemingly definitive content without revealing sources, presenting a significant hurdle in combating disinformation.

- Jessica Cecil wrote: “With a ChatGPT response, the reader has no idea if the source is the BBC, QAnon or a Russian bot.”

- This requires urgent collaboration among tech platforms, news agencies, and governments for responsible, fact-based information dissemination online.

Policy Development: The development of AI technologies has prompted conversations on establishing regulatory frameworks to address their societal and security implications.

- There have been ongoing discussions within the US Congress, along with a White House executive order on the safe, secure, and trustworthy development and use of AI.

- Recently, Britain’s National Cyber Security Centre, alongside its US counterpart CISA, published groundbreaking guidelines on AI security, with the support of 17 other countries.

Future Prospects: As ChatGPT celebrates its first year amid challenges and successes, it becomes increasingly apparent that AI’s national security implications are multidimensional, demanding a comprehensive approach involving policymakers, industry leaders, and the public to navigate this evolving landscape.